If you’re already tracking AI visibility, you’ve probably noticed how much has shifted this year. The way large language models reference brands and products isn’t static anymore. It’s evolving in a way that finally makes visibility measurable at a useful level.

In 2026, assistants like ChatGPT, Gemini, Claude and Perplexity have become more transparent about how they cite and attribute information. Platforms are opening their logs, and new tools now segment visibility by model or prompt type. For the first time, you can see how performance changes across phrasing and platforms. What used to be invisible is now a data layer you can actually work with.

The New Metrics That Actually Show Visibility Momentum

Early AI visibility tracking focused on one question: does the model mention my brand? That’s still a baseline, but it doesn’t tell you how your visibility is changing. The more useful signal is momentum: are you gaining trust across engines, or just holding steady?

Citation Share Trend (CST)

CST tracks your share of citations relative to competitors over time. This is one of the clearest signs of traction. A rising CST means models are choosing you more often, usually because your pages are better aligned with user intent and machine-readable context.
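As a rough illustration, CST can be sketched as the period-over-period change in your citation share. The formula, the function names, and the input shape (one dictionary of citation counts per period) are assumptions for this example, not a standard definition.

```python
def citation_share(counts: dict[str, int], brand: str) -> float:
    """Brand's fraction of all citations observed in one period."""
    total = sum(counts.values())
    return counts.get(brand, 0) / total if total else 0.0


def citation_share_trend(periods: list[dict[str, int]], brand: str) -> list[float]:
    """Relative change in citation share between consecutive periods.

    Assumption: "trend" means (current - previous) / previous.
    """
    shares = [citation_share(p, brand) for p in periods]
    changes = []
    for prev, curr in zip(shares, shares[1:]):
        # Relative change is undefined when the previous share is zero.
        changes.append((curr - prev) / prev if prev else float("nan"))
    return changes
```

With hypothetical counts where a brand moves from 20 of 100 citations to 30 of 100, the trend is roughly 0.5, meaning a 50 percent relative gain in share.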

Prompt Stability Index (PSI)

PSI measures how consistently a model recognizes your brand across a batch of similar prompts. A high PSI means the brand is recognized in most variants. A low score usually points to entity confusion or missing sameAs connections. It’s a good way to spot whether your brand identity is stable across different engines.
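One simple way to approximate PSI is the fraction of prompt variants whose answer mentions the brand. This sketch assumes you have already collected the responses as plain strings. The alias list and the substring matching are illustrative simplifications, and short aliases can produce false positives.

```python
def prompt_stability_index(responses: list[str], aliases: list[str]) -> float:
    """Fraction of responses that mention the brand under any known alias.

    Assumption: a case-insensitive substring match counts as recognition.
    """
    if not responses:
        return 0.0
    lowered = [a.lower() for a in aliases]
    hits = sum(any(a in r.lower() for a in lowered) for r in responses)
    return hits / len(responses)
```

The result falls between 0 and 1, which makes it easy to compare across engines or across batches of prompts.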

Evidence Alignment Rate (EAR)

This tracks how accurately a model uses your content when it does cite you. If it attributes statements that don’t appear on your page, that’s a sign your structured data or schema markup isn’t fully synchronized with your copy. A strong EAR means your content is seen as reliable evidence.
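A minimal sketch of an EAR check, assuming you have extracted the statements a model attributed to your page along with the page’s visible text. Normalized substring matching is a deliberately crude stand-in here. A production check would likely need fuzzy or semantic matching, and all names are hypothetical.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    no_punct = re.sub(r"[^\w\s]", "", text.lower())
    return re.sub(r"\s+", " ", no_punct).strip()


def evidence_alignment_rate(attributed: list[str], page_text: str) -> float:
    """Share of attributed statements that actually appear on the page.

    Assumption: a statement is "supported" only if its normalized form
    is a substring of the normalized page text.
    """
    if not attributed:
        return 0.0
    page = _normalize(page_text)
    supported = sum(_normalize(claim) in page for claim in attributed)
    return supported / len(attributed)
```

Statements that fail this check are the ones worth reviewing by hand, since they may be paraphrases rather than misattributions.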

Cross-Model Citation Density (CMCD)

CMCD looks at how your citations are spread across multiple AI systems, and it is one of the most useful trend markers in 2026. If you’re showing up in Gemini but missing in ChatGPT or Claude, that imbalance highlights dependency risk. Widening your coverage builds long-term resilience.
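To make the dependency risk concrete, the sketch below counts how many assistants cite you at all and how concentrated your citations are in the strongest one. Both measures, and the per-assistant count dictionary, are assumptions for illustration rather than an established CMCD formula.

```python
def cross_model_coverage(citations: dict[str, int]) -> tuple[int, float]:
    """Return (assistants citing the brand, share held by the top assistant).

    Assumption: a top share close to 1.0 indicates dependence on one engine.
    """
    covered = sum(1 for n in citations.values() if n > 0)
    total = sum(citations.values())
    top_share = max(citations.values()) / total if total else 0.0
    return covered, top_share
```

For hypothetical counts of 12 in Gemini, 3 in Claude, and none elsewhere, this reports two covered assistants with about 80 percent of citations concentrated in one.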

Visibility Velocity (VV)

VV measures how fast new or updated content earns its first citations. When it’s slow, something is blocking recognition. When it’s fast, your site structure and schema are likely clean and trusted. It’s a momentum metric worth watching each quarter.
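VV could be approximated as the median number of days between publishing a page and its first observed citation. This sketch assumes you log a publication date and a list of citation dates per page. Using the median and skipping uncited pages are illustrative choices.

```python
from datetime import date
from statistics import median
from typing import Optional


def days_to_first_citation(published: date, citations: list[date]) -> Optional[int]:
    """Days from publication to the earliest citation on or after it."""
    later = [c for c in citations if c >= published]
    return (min(later) - published).days if later else None


def visibility_velocity(pages: list[tuple[date, list[date]]]) -> Optional[float]:
    """Median days-to-first-citation across pages that have been cited.

    Assumption: pages with no citations yet are excluded, not penalized.
    """
    lags = [
        lag
        for published, cites in pages
        if (lag := days_to_first_citation(published, cites)) is not None
    ]
    return median(lags) if lags else None
```

Because uncited pages are excluded, it helps to report the count of uncited pages alongside this number so a low median does not hide slow pages.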

Together, these signals create a full picture of how AI models understand and prioritize your content. Visibility isn’t a switch that’s on or off anymore. It’s a dynamic footprint that expands as your trust signals improve.

The 2026 Tool Landscape

The tools that track AI visibility have matured quickly. What started as simple mention counters has turned into multi-layer diagnostic platforms. Many of the newer systems combine citation data with schema evaluation and entity mapping, helping marketers understand why visibility rises or falls.

A growing number of dashboards now support prompt simulation, where you can feed a structured set of prompts into different engines and see how citations vary. Some even highlight differences between factual queries, comparisons, and transactional questions so you can isolate weak spots in your content structure.

The other big shift is competitive benchmarking. Instead of viewing your own mentions in isolation, you can now measure share of voice among direct competitors. This is how AI visibility becomes a true performance metric rather than an experimental curiosity. You can measure, compare, and adjust with real context.

For a broader perspective on how models handle attribution, the OpenAI guide on how ChatGPT sources and develops answers and Google’s Gemini API documentation are useful reads. They show how retrieval systems surface citations based on structured and contextual signals.

What “Good” Looks Like Right Now

Benchmarks are still forming, but enough data is circulating to give shape to what strong performance looks like. These ranges aren’t fixed rules, but they help anchor expectations.

- CST: a 15 percent increase quarter over quarter signals meaningful progress

- PSI: above 0.7 shows consistent entity definition

- EAR: above 85 percent suggests models are citing you accurately

- CMCD: appearing in at least three major assistants means you’re building cross-model credibility

Treat these as directional indicators, not universal targets. Large language models retrain often, and their citation logic changes with every update. The point is to see movement in the right direction. Growth in multiple metrics means your entity signals and content quality are improving in tandem.
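The directional ranges above can be turned into a quick check, sketched here under the assumption that each metric has already been computed as a plain number. The function name and parameter names are hypothetical. The thresholds come straight from the list and should be treated as adjustable.

```python
def benchmark_report(
    cst_qoq_change: float,    # e.g. 0.18 for an 18 percent quarterly increase
    psi: float,               # 0 to 1
    ear: float,               # 0 to 1
    assistants_covered: int,  # assistants citing the brand at least once
) -> dict[str, bool]:
    """Flag which metrics meet the article's directional ranges."""
    return {
        "CST": cst_qoq_change >= 0.15,
        "PSI": psi > 0.7,
        "EAR": ear > 0.85,
        "CMCD": assistants_covered >= 3,
    }
```

A report with one or two failing flags is a prompt for diagnosis, not a verdict, since the ranges themselves will shift as models retrain.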

From Tracking to Action

Data only matters when it drives change. Once you begin collecting visibility metrics, you’ll start spotting patterns quickly. Each one points to a different kind of optimization.

If your Prompt Stability Index is weak, check for inconsistent naming, missing schema, or incomplete sameAs references. Consistency across your domain, social profiles, and structured data helps models link everything together.
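As an example of complete sameAs references, the sketch below generates schema.org Organization markup as JSON-LD from a single source of truth, so the brand name and profile links stay consistent wherever the markup is embedded. The helper name and the example.com URLs are placeholders.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build schema.org Organization JSON-LD with deduplicated sameAs links."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists other profiles that refer to the same entity.
        "sameAs": sorted(set(same_as)),
    }
    return json.dumps(data, indent=2)


markup = organization_jsonld(
    "Example Brand",
    "https://www.example.com",
    [
        "https://social.example.com/examplebrand",
        "https://directory.example.com/example-brand",
    ],
)
```

The resulting string would typically be placed inside a script tag of type application/ld+json on the relevant pages.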

When Citation Share Trend flattens, refresh the evidence within key pages. Add concrete facts and clear context high on the page. Models prioritize declarative content that mirrors the structure of a direct answer.

Low Evidence Alignment Rate often means your schema doesn’t accurately reflect your visible content. Align your markup with what’s on the page so models can trust what they parse.

If new content takes too long to appear in AI answers, review internal linking and page hierarchy. Models weigh crawlability and semantic connections, even when they’re not indexing in the traditional search sense.

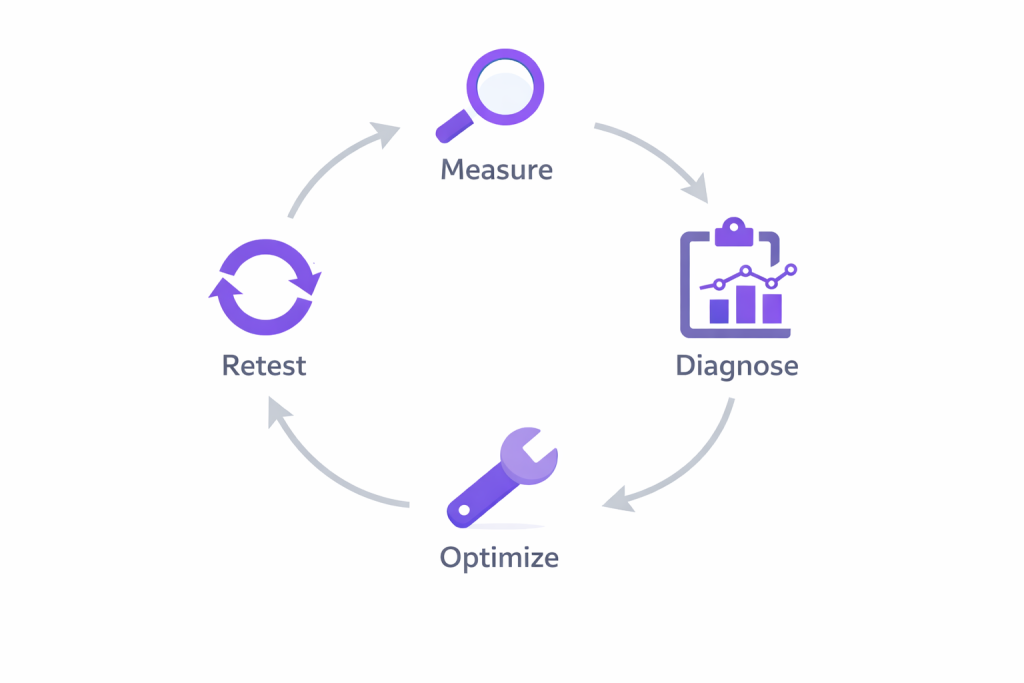

A simple rhythm helps keep progress visible: measure, diagnose, optimize, retest. Repeat it quarterly to track whether visibility changes correspond with updates you’ve made.

Benchmarking Reality

An interesting trend this year is how some visibility tools now highlight why a citation dropped, not just that it did. They separate losses caused by missing evidence from those triggered by entity overlap or outdated schema. That’s the kind of diagnostic detail that helps you take action instead of guessing.

These insights make AI visibility feel more like a living competitive analysis than a static metric. You’re no longer asking how to rank higher but how to become the more trustworthy and clearly defined entity within the model’s knowledge graph.

Closing Thoughts

AI visibility measurement is settling into its own space. With transparent citation data and better diagnostic tools, marketers can now track how trust and structure impact their brand presence inside AI systems. The question is no longer just whether you’re mentioned, but whether the right page is being cited for the right reasons.

Understanding why a model chooses your content is the key to staying visible as assistants evolve. That’s the real advantage of measuring what used to be invisible.

Karaya helps brands measure, compare and strengthen their AI visibility through competitive intelligence and structured content analysis.
